Johns Hopkins’ Education School Reviews Educational Products for Companies

The Education Industry Association is encouraging its members to contract with Johns Hopkins University’s school of education to study the effectiveness of those companies’ products and services.

In a letter to members of EIA—which the organization tweeted out—James D. Giovannini, the association’s executive director, encouraged them to consider how a positive outcome from a Hopkins instructional program design review would help their businesses.

Such a finding could benefit a marketing campaign, as well as investors in a company, and the students who ultimately use the products, he wrote.

Last year, Hopkins’ researchers conducted a study of procurement in K-12 schools commissioned by the Education Industry Association and Digital Promise. One of the findings was that vendors often approach districts without evidence, or credible evidence, that their products work.

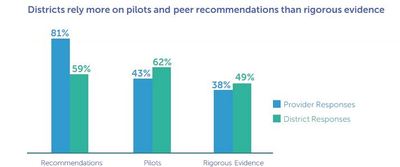

In fact, the “Improving Ed-Tech Purchasing” report, which incorporated feedback from 54 districts and 47 companies, found that schools receive limited “meaningful” evidence of the success of a product when vendors are trying to sell them.

On the other side, districts often don’t trust providers’ evidence when it is available. For instance, only 29 percent of technology directors reported being satisfied with the credibility of providers’ evidence.

In his letter, Giovannini indicated that the cost of conducting the reviews has been discounted for EIA members.

At $3,500 to $5,000, an instruction design review is the least expensive of five options available to companies seeking evaluations from Hopkins’ Center for Research and Reform in Education. The evaluation assesses a product’s alignment with instructional design standards and best practices. Among the criteria: the logic of the model; its theoretical framework; the use of evidence-based strategies; customer analyses; instructional objectives; pedagogy; and delivery/user support.

Johns Hopkins would not publish or disseminate the results of design reviews, which are descriptive and lack implementation validation from practitioners, according to Steven M. Ross, a Johns Hopkins professor and evaluation director. It would be up to the company whether to release the report.

The university has offered a variety of evaluation services for the past two years, said Ross, and has delivered five to seven such studies to EIA members in that time.

Other levels of evaluation are available, including:

- A short-cycle evaluation, which uses observations, surveys, and interviews with teachers and students in a 10- to 15-week period to determine the effectiveness of a product for broader adoption in a district or schools, at $10,000 to $30,000;

- A case study, involving small, mixed-methods descriptive studies that are more intensive and rigorous than short-cycle studies, for $15,000 to $20,000;

- An efficacy study, which is medium-scale and focuses on how programs and educational offerings operate and affect educational outcomes in try-outs, at $20,000 to $35,000; and

- An effectiveness study, which is a large-scale summative evaluation study that focuses on the success of the program in improving outcomes in rigorous, non-randomized experimental studies or randomized controlled studies, at a cost of $38,000 or more.

Ross said the largest Hopkins product research currently underway with an EIA member is an efficacy/effectiveness study in California of McGraw-Hill’s Reading Wonders. Data collection will continue through June, with a final report due by fall.

In their contracts with Johns Hopkins, companies can request that the university try to publish or publicize the study on the academic institution’s website. Education companies would be making that decision before finding out the results of the research.

Recently, the University of Virginia’s School of Education announced the launch of the Jefferson Education Accelerator, which will give ed-tech companies the ability to have their products tested in K-12 districts and in colleges through independent reviews.

Like the Johns Hopkins evaluations, it has the potential to give an imprimatur of evidence-based success that vendors can market to districts that are trying to sort through the influx of these products.

And Pearson is working on plans to release efficacy information about its products. That program was announced in 2013, and at a recent Education Writers Association conference, Amar Kumar, Pearson’s senior vice president of efficacy and research, told me that his company would “pull the plug” on a product—even if it’s profitable—if that product is proven not to be efficacious.

