Search for Quick, Rigorous Ed-Tech Evaluations Underway

UPDATED

Work began this month on a federally funded project designed to quickly determine “what works” in educational technology, so that schools and districts can make faster decisions about which products to use.

Mathematica Policy Research won the $3.67 million contract to devise tools for rapid—and rigorous—evaluation of ed-tech products. The goal is to come up with a platform where educators can choose a test that will help them determine—within one to three months—how effective a particular ed-tech product is in their schools. SRI International is a partner on the project.

The platform is meant to walk a practitioner, school leader, researcher, or app developer through choosing the research design that makes the most sense, and to ask questions that help them set up the study, said Katrina Stevens, a senior advisor at the U.S. Department of Education’s Office of Educational Technology, which is funding the so-called “rapid-cycle tech evaluation” project.

“We want district and school leaders to have what they need to make evidence-based decisions about the tech they’re using in the classrooms,” she said.

Whatever tools are produced under the contract will be openly licensed, Stevens said. (On Thursday, the education department announced a proposed regulation that would require any digital educational resources developed using federal funding to be openly licensed.)

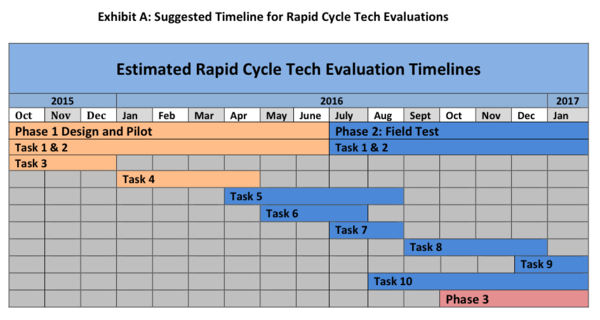

The $1.43 million first and second phases of the project will last 16 months. They will involve conducting three to six pilot tests “to work out the kinks in the research design,” Stevens said, followed by field tests of eight to 12 ed-tech resources, or apps. The project includes an additional optional phase, budgeted at $2.24 million and as yet unfunded, to conduct large-scale rapid-cycle tech evaluations of up to 60 apps.

Rigorous, Fast Research?

“There’s a perception about rigorous evaluation, that it isn’t applicable to this area,” said Alexandra Resch, an associate director and senior researcher at Mathematica and the project’s director. “One of our goals is to show you can do rigorous research, basically bringing it down to a smaller scale” that will be useful to developers and districts, she said.

By rigorous, Resch means producing enough evidence that a district can learn something meaningful about a product’s effectiveness. “The key to that is having some sort of valid comparison group that can give you a sense of what would have happened without that tool,” she said.

Asked whether such fast-turnaround evaluations can be done reliably, Resch pointed to a precedent: the White House Social and Behavioral Sciences Team, which has conducted similar research on behavioral interventions. When individuals respond quickly to an intervention, “we can do rapid evaluations,” she said.

On the other hand, “not all technologies might be conducive to this,” she explained.

Resch said the project’s fast timeline is itself ambitious. She is now bringing together a technical working group representing school districts, developers, and researchers to begin the process.

See also:

- Ed. Department Aims to Accelerate Ed-Tech Evaluations

- K-12 District Leaders Evolving Into Smarter Ed-Tech Consumers

- Ed-Tech Vendors Often in Dark on District Needs, Study Shows

- Regional Partnerships Emerging to Support K-12 Innovation

- Open Ed. Resources Would Get Boost Under Education Department Proposal

UPDATE: This blog post was updated on Nov. 9 to include the fact that SRI International is a partner with Mathematica on this project, and to explain that the optional third phase of the evaluation project could test as many as 60 apps.
